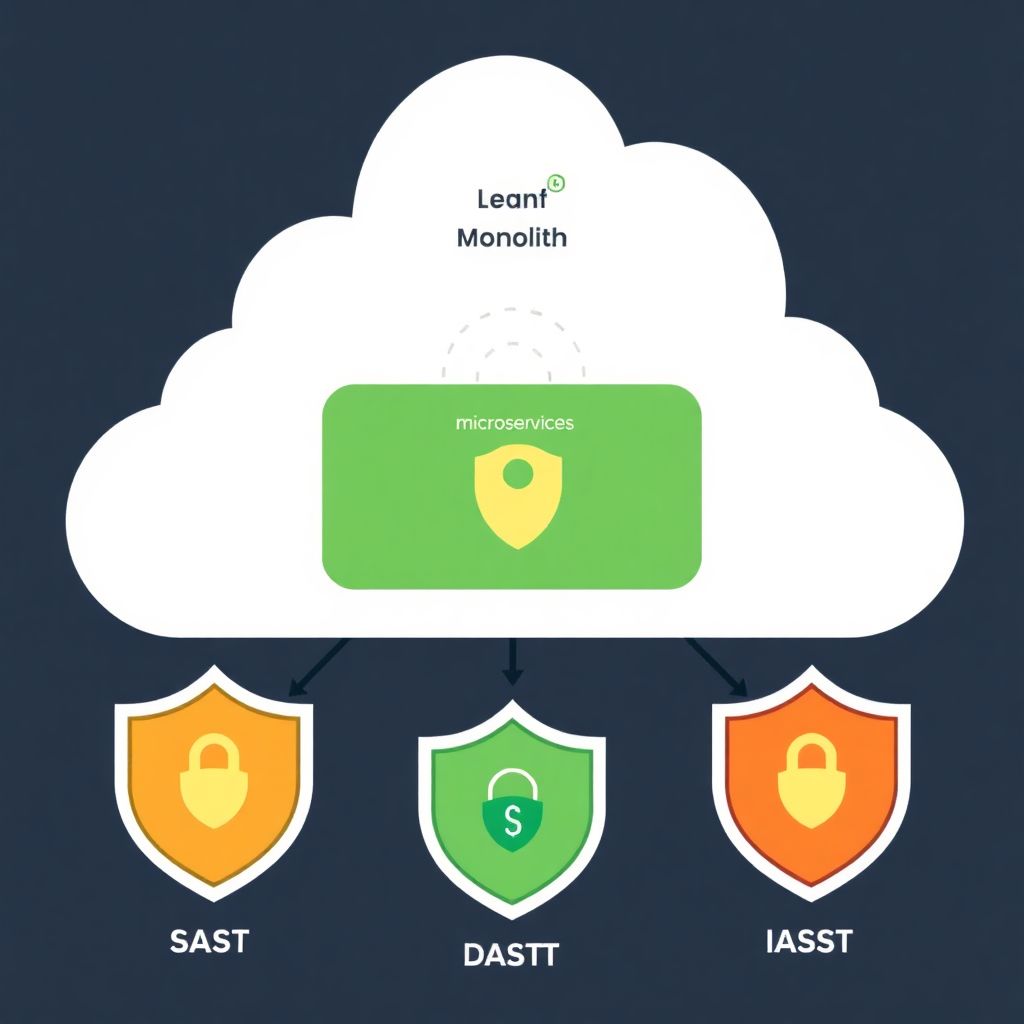

Cloud-hosted applications benefit most from a combined approach: SAST to catch code issues early, DAST to find exploitable runtime flaws in exposed services and IAST where you need deep insight into complex cloud-native stacks. The best choice depends on your architecture (monolith, microservices or serverless), compliance needs, team skills and how tightly you integrate security into CI/CD.

Executive summary: cloud-native SAST, DAST and IAST at a glance

- SAST is the backbone for early detection in code and fits well into CI pipelines for most cloud applications, but it cannot see configuration and runtime issues.

- DAST focuses on real HTTP/S traffic and is mandatory to validate what is actually exploitable in your cloud environment, especially on internet-exposed services and APIs.

- IAST adds runtime context using agents; it is powerful for complex microservices but adds overhead and requires careful coordination with operations teams.

- For most teams, a pragmatic baseline is SAST + DAST, adding IAST where critical services or regulatory requirements justify the extra effort.

- Cloud fit depends less on vendor branding and more on integrations with your cloud CI/CD, containers, serverless runtimes and identity stack.

- Start from measurable criteria: coverage, noise (false positives), scan time, impact on pipelines and total cost, not just advertised vulnerability counts.
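To keep a shortlist tied to measurable criteria rather than advertised counts, one option is a simple weighted score. The sketch below is an illustration, not a standard method: the weights, criterion names, tool names and values are hypothetical, and each criterion is assumed to be pre-normalized to 0..1 with higher meaning better (so noise, scan time and cost are inverted before scoring).

```python
from dataclasses import dataclass

# Hypothetical weights; tune them to your own priorities. They must sum to 1.0.
WEIGHTS = {
    "coverage": 0.30,
    "low_noise": 0.25,     # 1.0 = no false positives observed in the pilot
    "fast_scans": 0.20,    # 1.0 = comfortably within your pipeline time budget
    "pipeline_fit": 0.15,  # quality of CI/CD, registry and ticketing integrations
    "cost": 0.10,          # 1.0 = cheapest option on the shortlist
}


@dataclass
class Candidate:
    name: str
    scores: dict[str, float]  # each value already normalized to 0..1


def weighted_score(candidate: Candidate, weights: dict[str, float] = WEIGHTS) -> float:
    """Combine normalized criterion scores into a single 0..1 value."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(candidate.scores)
    if missing:
        raise ValueError(f"{candidate.name} is missing scores for {sorted(missing)}")
    return sum(weights[c] * candidate.scores[c] for c in weights)


def rank(candidates: list[Candidate]) -> list[tuple[str, float]]:
    """Return (name, score) pairs, best first."""
    scored = [(c.name, round(weighted_score(c), 3)) for c in candidates]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical tools and pilot results.
    shortlist = [
        Candidate("tool-a", {"coverage": 0.9, "low_noise": 0.5, "fast_scans": 0.6,
                             "pipeline_fit": 0.8, "cost": 0.4}),
        Candidate("tool-b", {"coverage": 0.7, "low_noise": 0.8, "fast_scans": 0.9,
                             "pipeline_fit": 0.7, "cost": 0.7}),
    ]
    for name, score in rank(shortlist):
        print(name, score)
```

Re-running the ranking with different weights is a quick way to see whether a winner is robust or hinges on a single criterion.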

Cloud hosting threats and constraints that shape SAST/DAST/IAST selection

Cloud hosting changes how you choose SAST, DAST and IAST tools for cloud applications, because threats and constraints differ from those in on‑premises environments.

- Ephemeral infrastructure and autoscaling: Instances come and go quickly, so DAST and IAST must handle dynamic endpoints and short‑lived test environments, while SAST must integrate with the pipeline that creates these images.

- Multi‑tenant and shared responsibility: Many findings in cloud applications relate to misconfigured identity, networking and storage; SAST of application code alone cannot see these, so you typically combine it with DAST and sometimes cloud posture tools.

- API‑first and microservices: You need DAST that is strong at API testing and IAST or detailed logging for tracing flows across services; SAST must understand shared libraries and contracts.

- Serverless and managed services: Traditional DAST that expects long‑lived servers might miss event‑driven flows; IAST agents are harder to deploy, so SAST plus targeted DAST becomes more important.

- Containerized delivery (Kubernetes, ECS, etc.): Security testing needs to plug into image build and deployment stages; IAST agents must work well with containers and sidecars without breaking orchestration.

- Regulatory and data residency requirements in Brazil: Compliance (for example, with Brazil's LGPD data protection law) often requires auditable processes; choose tools that generate clear evidence of SAST, DAST and, optionally, IAST coverage.

- Latency and cost of scans in CI/CD: Long SAST runs can block deployment; DAST and IAST must be tunable for smoke tests versus deep scans, with clear SLAs so developers keep using them.

- Identity, SSO and OAuth/OIDC: Modern cloud apps rely heavily on federated identity; DAST must handle complex logins, IAST agents must respect security boundaries and SAST rules should cover authorization logic in the code.
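For the auditable-evidence point, one lightweight approach is to keep a small record per scan that ties the raw report to a commit and timestamp through a SHA-256 digest. This is a sketch under assumptions: the field names are a hypothetical schema, not a format required by LGPD or any other regulation, and a digest only helps if the records themselves are kept in append-only or write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

SCAN_TYPES = {"SAST", "DAST", "IAST"}


def evidence_record(report_bytes: bytes, scan_type: str, service: str,
                    commit_sha: str, when: Optional[datetime] = None) -> dict:
    """Summarize one scan run for audit purposes (hypothetical schema)."""
    if scan_type not in SCAN_TYPES:
        raise ValueError(f"unknown scan type: {scan_type}")
    if when is None:
        when = datetime.now(timezone.utc)
    return {
        "scan_type": scan_type,
        "service": service,
        "commit": commit_sha,
        "timestamp": when.isoformat(),
        # The digest lets an auditor check later that the stored report is unchanged.
        "report_sha256": hashlib.sha256(report_bytes).hexdigest(),
    }


def verify(record: dict, report_bytes: bytes) -> bool:
    """Return True if the report still matches the digest in its evidence record."""
    return hashlib.sha256(report_bytes).hexdigest() == record["report_sha256"]


if __name__ == "__main__":
    # Hypothetical service name and commit.
    report = b'{"findings": []}'
    rec = evidence_record(report, "SAST", "payments-api", "abc123",
                          when=datetime(2024, 1, 15, tzinfo=timezone.utc))
    print(json.dumps(rec, indent=2))
    print(verify(rec, report))          # True
    print(verify(rec, report + b" "))   # False
```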

Evaluation methodology: metrics, testbeds and bias controls for cloud apps

To compare cloud application security tools across SAST, DAST and IAST‑style options, build a small but realistic testbed with APIs, web frontends, microservices and at least one serverless function. Run repeatable tests and measure how each approach behaves for your actual workloads.

| Approach | Best suited for | Pros | Cons | When to choose |
|---|---|---|---|---|
| SAST‑first baseline | Teams starting AppSec in CI/CD for cloud‑hosted monoliths or simple APIs | Integrates early in development; no runtime access needed; easier approvals; good for coding standards. | Misses environment‑specific bugs; limited view of cloud misconfigurations; may generate many false positives. | If you need fast governance and coding hygiene, and can only deploy one type of scanner initially. |
| DAST‑centric validation | Internet‑facing portals and APIs deployed on Kubernetes or PaaS platforms | Shows exploitable issues; validates auth flows; cloud‑agnostic; supports black‑box security tests. | Depends on stable test environments; less guidance on exact code locations; slower feedback for developers. | When you must quickly reduce the exposed attack surface and demonstrate real‑world protection on cloud endpoints. |
| IAST‑enhanced runtime insight | Complex microservices with many internal APIs and data flows | High‑fidelity findings with execution context; fewer false positives; maps vulnerabilities to code and service boundaries. | Agent deployment and performance overhead; not ideal for all runtimes (e.g., some serverless); more operational work. | When critical cloud workloads justify deeper runtime telemetry and coordination between Dev, Sec and Ops. |
| Balanced SAST + DAST mix | Most product teams with mature CI/CD and multiple cloud environments | Catches both code‑level and runtime issues; spreads risk; enables shift‑left and pre‑production validation. | More tools to manage; overlapping coverage; requires process design to avoid duplicated noise. | If you want a sustainable middle ground for cloud applications without immediately managing IAST agents. |
| Cloud‑provider‑native add‑ons | Organizations heavily invested in a single cloud (e.g., only one major hyperscaler) | Tighter integration with cloud IAM, logs and deployment tooling; simpler procurement and networking. | Vendor lock‑in; coverage focused on that ecosystem; features may lag specialized SAST/DAST/IAST vendors. | When consolidation and native integration matter more than having the deepest specialized capabilities. |

To control bias in your comparison of SAST and DAST tools for cloud applications, keep test data, configuration and timeboxes identical across tools, and include both known vulnerable components and realistic business logic. Track not just the number of findings, but also triage effort, false positives and how quickly developers can fix issues.
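When the testbed contains seeded, known vulnerabilities, each tool's output can be scored against that ground truth. The sketch below assumes findings have already been normalized into (service, CWE) pairs, which is a simplification: real tools report locations differently and usually need a mapping step. The service names and triage minutes are hypothetical values you would record during the pilot.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    service: str
    cwe: str  # weakness class, e.g. "CWE-89" (SQL injection)


def benchmark(reported: set[Finding], seeded: set[Finding],
              triage_minutes: float) -> dict[str, float]:
    """Score one tool's normalized findings against the testbed's seeded vulnerabilities."""
    true_pos = reported & seeded
    false_pos = reported - seeded
    return {
        "true_positives": len(true_pos),
        "false_positives": len(false_pos),
        # Share of reported findings that were real.
        "precision": round(len(true_pos) / len(reported), 3) if reported else 0.0,
        # Share of seeded vulnerabilities the tool actually found.
        "recall": round(len(true_pos) / len(seeded), 3) if seeded else 0.0,
        # Triage time spent per real issue found; lower is better.
        "triage_min_per_tp": round(triage_minutes / len(true_pos), 1) if true_pos else float("inf"),
    }


# Hypothetical testbed: three seeded issues, one tool's normalized output.
seeded = {Finding("orders", "CWE-89"), Finding("auth", "CWE-287"), Finding("files", "CWE-22")}
reported = {Finding("orders", "CWE-89"), Finding("files", "CWE-22"), Finding("orders", "CWE-79")}
print(benchmark(reported, seeded, triage_minutes=90))
```

Running the same function for every candidate on identical inputs is what keeps the comparison fair; only the `reported` set should change between tools.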

Feature comparison: integrations, scalability, accuracy and pricing

Instead of chasing generic rankings of cloud application security testing solutions (SAST, DAST and IAST), map features to your cloud use‑cases and constraints. Focus on how each approach behaves under scaling, across regions and within your specific toolchain.

Use the following rule‑of‑thumb mapping for common cloud scenarios:

- If you run a monolithic web app on a managed PaaS, prioritize strong SAST integration into your CI plus scheduled DAST scans against staging and production‑like environments.

- If you run microservices on Kubernetes, look for cloud application security platforms with SAST, DAST and IAST that support container‑aware SAST (Dockerfiles, IaC) plus DAST with API support and optional IAST agents for the most critical services.

- If you run mainly serverless functions and managed APIs, select SAST that understands your language and framework, plus DAST that can trigger event‑driven flows; treat IAST as optional, used only where agents are well supported.

- If you handle high‑sensitivity data in multiple regions, emphasize accuracy (low false positives), data residency controls and fine‑grained role‑based access in any SAST/DAST/IAST platform you consider.

When estimating cost and scalability, evaluate:

- Integrations: Native plugins for your Git repositories, CI servers, container registries, cloud pipelines and ticketing tools.

- Scalability: Ability to parallelize SAST scans per microservice, run concurrent DAST jobs for multiple environments and deploy IAST agents without hitting resource limits.

- Accuracy: Historical noise levels for your stack, language support, framework‑specific rules and how fast rules are updated for new cloud attack patterns.

- Pricing model: User‑based, app‑based or pipeline‑based pricing can dramatically change TCO in cloud environments where services and environments multiply.
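To see how pricing models can diverge as services and pipelines multiply, the sketch below compares the annual cost of the same coverage under user-based, app-based and pipeline-based pricing. All prices and counts are invented placeholders, not real vendor rates; plug in actual quotes.

```python
# Hypothetical list prices per unit per year; replace with real vendor quotes.
PRICES = {"per_user": 400.0, "per_app": 1500.0, "per_pipeline": 900.0}


def annual_costs(developers: int, apps: int, pipelines: int,
                 prices: dict[str, float] = PRICES) -> dict[str, float]:
    """Annual cost of the same coverage under each pricing model."""
    units = {"per_user": developers, "per_app": apps, "per_pipeline": pipelines}
    return {model: units[model] * price for model, price in prices.items()}


def cheapest(costs: dict[str, float]) -> str:
    """Name of the pricing model with the lowest annual cost."""
    return min(costs, key=costs.get)


# Year 1: a few large services. Year 2: a similar team after splitting into microservices.
scenarios = {"year 1": (40, 10, 12), "year 2": (45, 30, 40)}
for label, (devs, apps, pipes) in scenarios.items():
    costs = annual_costs(devs, apps, pipes)
    print(label, costs, "->", cheapest(costs))
```

With these made-up numbers the cheapest model flips from per-pipeline to per-user once apps and pipelines multiply, which is the kind of effect worth checking with real quotes before signing a multi-year contract.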

SAST analysis: code-level coverage, false positives and CI pipeline fit

Use this quick checklist to choose and operate SAST for cloud‑hosted applications.

- Map your languages and frameworks: Shortlist SAST tools that genuinely support your main languages, cloud frameworks and IaC templates instead of relying on generic or legacy rule sets.

- Define acceptable scan times: Decide the maximum SAST duration for pull‑request checks and nightly full scans, then benchmark candidate tools against these thresholds before committing.

- Evaluate false‑positive handling: Check how easily developers can suppress or reclassify findings, and whether the tool learns from previous triage decisions across cloud repos.

- Test CI/CD integration depth: Ensure SAST can block builds on critical issues, publish results as code review comments and tag findings by microservice or deployment unit.

- Include infrastructure‑as‑code: Prefer SAST engines that scan Terraform, CloudFormation or Kubernetes manifests alongside application code to catch cloud misconfigurations early.

- Plan multi‑repo coverage: For large cloud programs, confirm that SAST can handle many small repositories, shared libraries and templates without complex manual configuration.

- Align with developer workflows: Pilot with a few squads and gather feedback; if SAST is seen as blocking or noisy, tune rules and thresholds before wide rollout.
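Many SAST tools can export results in SARIF 2.1.0, the OASIS JSON format for static analysis results. Assuming your scanner writes SARIF, a minimal CI gate could fail the build on unsuppressed `error`-level results and summarize them per service. Treating the first path segment of a file URI as the service name is a hypothetical repository-layout convention, and the level and suppression handling are simplifications noted in the comments.

```python
import json
from collections import Counter


def blocking_results(sarif: dict) -> list[dict]:
    """Collect unsuppressed 'error'-level results from every run in a SARIF log."""
    blocking = []
    for run in sarif.get("runs", []):
        for result in run.get("results", []):
            # Simplification: SARIF also allows rule-level default levels; we assume "warning".
            level = result.get("level", "warning")
            # Simplification: any suppression entry counts as accepted (status is ignored).
            suppressed = bool(result.get("suppressions"))
            if level == "error" and not suppressed:
                blocking.append(result)
    return blocking


def service_of(result: dict) -> str:
    """Hypothetical convention: the first path segment of the file URI names the service."""
    try:
        uri = result["locations"][0]["physicalLocation"]["artifactLocation"]["uri"]
    except (KeyError, IndexError, TypeError):
        return "unknown"
    return uri.split("/", 1)[0]


def gate(sarif: dict) -> int:
    """Print blocking findings per service; return 1 to fail the build, else 0."""
    blocking = blocking_results(sarif)
    for service, count in sorted(Counter(service_of(r) for r in blocking).items()):
        print(f"{service}: {count} blocking finding(s)")
    return 1 if blocking else 0


def gate_file(path: str) -> int:
    """Load a SARIF file from disk and apply the gate."""
    with open(path, encoding="utf-8") as fh:
        return gate(json.load(fh))
```

A pipeline step could then call `sys.exit(gate_file("results.sarif"))`; the file name is a placeholder, and some scanners need an explicit option to emit SARIF at all.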

DAST vs IAST in runtime: behavioral detection, agent trade-offs and telemetry

Teams often make the same mistakes when selecting DAST and IAST for cloud applications:

- Relying only on unauthenticated DAST, which misses most business‑logic and authorization flaws behind login screens and APIs.

- Underestimating the effort to model complex authentication and multi‑factor flows in DAST, leading to shallow test coverage.

- Deploying IAST agents everywhere without performance tests, instead of starting with a few critical cloud services.

- Ignoring runtime telemetry and logs; IAST is powerful only if you actively analyze and act on the context it provides.

- Expecting IAST to replace both SAST and DAST; in practice it complements them and is most valuable where complexity is highest.

- Not involving operations and SRE teams when planning IAST deployment, resulting in rollout delays and friction.

- Running DAST only in production, which complicates safe testing; staging or ephemeral review environments in the cloud work much better.

- Failing to align rate limits and scan windows with autoscaling and WAF rules, causing noisy alerts or blocked DAST traffic.

- Assuming any single DAST/IAST product will natively understand every proprietary API or message format used in your cloud estate.
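Keeping DAST traffic within agreed rate limits and WAF thresholds usually means throttling requests, either through the scanner's own settings or a wrapper script. The token bucket below is a generic sketch of that idea, not any particular scanner's configuration; the rate and burst values are hypothetical and should come from the limits you agree with operations. The clock is injectable so the behavior can be tested deterministically.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow on average `rate` requests per second, with bursts of up to `capacity`."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start with a full bucket
        self.clock = clock
        self.last = clock()

    def try_acquire(self) -> bool:
        """Consume one token if available; return False if the request should wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical limit agreed with operations: 5 requests/s on average, bursts of 10.
limiter = TokenBucket(rate=5.0, capacity=10)
```

In a scan wrapper you would call `try_acquire()` before each request and back off briefly (for example with `time.sleep`) when it returns `False`.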

Decision tree for selecting SAST/DAST/IAST combinations by application architecture

Use this mini decision guide before committing to tools or platforms:

- If your main system is a monolith on a managed PaaS, start with strong SAST in CI and add DAST for key user flows.

- If you run microservices on containers, adopt SAST + DAST as a baseline and consider IAST for the most critical internal APIs.

- If your workloads are mainly serverless, rely on SAST plus carefully scripted DAST; introduce IAST only where runtime support is mature.

- If you need fast compliance evidence, choose a platform that consolidates SAST and DAST reports and supports clear audit exports.

For most cloud‑hosted monoliths, the best balance is SAST integrated into CI plus scheduled DAST against stable environments. For microservices and complex APIs, combining SAST, API‑aware DAST and selective IAST brings the strongest coverage. For highly event‑driven serverless apps, tuned SAST with focused DAST flows generally delivers the best return.
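The architecture rules in the decision guide above can be written down as a small function so that every team applies them the same way. This is a hypothetical sketch: the architecture labels and the `critical` and `mature_iast_runtime` flags are inputs you would define yourself, and the compliance rule is left out because it concerns reporting rather than the scan mix.

```python
from enum import Enum


class Arch(Enum):
    MONOLITH_PAAS = "monolith on managed PaaS"
    MICROSERVICES = "microservices on containers"
    SERVERLESS = "mainly serverless"


def recommended_mix(arch: Arch, critical: bool = False,
                    mature_iast_runtime: bool = False) -> set[str]:
    """Map the decision guide to a testing mix for one service (illustrative rules only)."""
    # Every branch of the guide starts from SAST in CI plus some form of DAST.
    mix = {"SAST", "DAST"}
    if arch is Arch.MICROSERVICES and critical:
        mix.add("IAST")  # selective IAST for the most critical internal APIs
    if arch is Arch.SERVERLESS and mature_iast_runtime:
        mix.add("IAST")  # only where agent support for the runtime is mature
    return mix


print(sorted(recommended_mix(Arch.MICROSERVICES, critical=True)))
```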

Operational and procurement questions for cloud security testing

How should I prioritize SAST, DAST and IAST if I have limited budget?

Start with SAST integrated into CI to catch issues early, then add DAST for exposed web and API endpoints. Consider IAST only for critical, complex workloads where runtime context significantly improves accuracy and justifies higher operational effort.

Where should DAST scans run in a cloud environment?

Run DAST primarily against staging or pre‑production environments that closely mirror production, with realistic data and auth flows. Use limited, well‑coordinated scans against production only when necessary and approved, observing rate limits and change windows.
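A pre-scan policy check can enforce "staging first, production only when approved" before any DAST job starts. The environment names, the approval flag and the 01:00 to 05:00 change window are hypothetical; in practice they would come from your change-management system.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass
class ScanRequest:
    environment: str   # e.g. "staging", "review-123", "production"
    approved: bool     # change ticket approved for a production scan
    start: datetime    # planned start, in the change window's time zone


# Hypothetical agreed change window for production scans.
WINDOW_START, WINDOW_END = time(1, 0), time(5, 0)


def may_run(req: ScanRequest) -> tuple[bool, str]:
    """Decide whether a DAST scan may start, with a reason for the pipeline log."""
    if req.environment != "production":
        return True, "non-production target"
    if not req.approved:
        return False, "production scan without approval"
    if not (WINDOW_START <= req.start.time() < WINDOW_END):
        return False, "outside the agreed change window"
    return True, "approved production scan inside the change window"


print(may_run(ScanRequest("production", True, datetime(2024, 5, 1, 3, 0))))
```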

What teams should own SAST, DAST and IAST operations?

Development teams should own day‑to‑day SAST usage, supported by AppSec. Security typically orchestrates DAST and defines policies, while operations or SRE must be involved for IAST agent deployment and runtime monitoring.

How can I avoid scan times blocking my CI/CD pipelines?

Split scans into fast checks for pull requests and deeper scans on nightly or pre‑release pipelines. Tune SAST rules, scope DAST tests and schedule IAST‑based deep dives so that developers get quick feedback without delaying frequent cloud deployments.
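Splitting scans by trigger can be as simple as mapping pipeline events to scan profiles with time budgets. The trigger names, budgets and profile contents below are hypothetical defaults; align them with your CI system's event names and your measured scan times.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanProfile:
    name: str
    sast_scope: str       # "changed_files" or "full"
    dast_depth: str       # "none", "smoke" or "full"
    iast_session: bool    # run an instrumented test session (IAST deep dive)
    budget_minutes: int   # flag the stage if it runs longer than this


# Hypothetical trigger names and budgets.
PROFILES = {
    "pull_request": ScanProfile("fast", "changed_files", "none", False, 10),
    "merge_to_main": ScanProfile("standard", "full", "smoke", False, 30),
    "nightly": ScanProfile("deep", "full", "full", True, 240),
    "pre_release": ScanProfile("deep", "full", "full", True, 240),
}


def profile_for(trigger: str) -> ScanProfile:
    """Pick the profile for a pipeline trigger; unknown triggers get the standard profile."""
    return PROFILES.get(trigger, PROFILES["merge_to_main"])


def over_budget(profile: ScanProfile, elapsed_minutes: float) -> bool:
    """True if the scan stage exceeded its time budget and should be reviewed or tuned."""
    return elapsed_minutes > profile.budget_minutes
```

Falling back to the standard profile rather than the fast one is a deliberate choice: an unrecognized trigger should not silently receive the lightest checks.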

What metrics are most useful to track tool effectiveness?

Measure vulnerability remediation time, false‑positive rate, scan duration and percentage of services covered. Track how many findings come from SAST versus DAST or IAST and how often they lead to real code or configuration changes.
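Most of these metrics can be computed from a findings export plus a service inventory. The record fields (`source`, `opened`, `closed`, `false_positive`) are a hypothetical schema; map them from whatever your ticketing or AppSec platform actually exports. Scan duration is left out here because it usually comes from CI logs instead.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class Record:
    service: str
    source: str              # "SAST", "DAST" or "IAST"
    opened: date
    closed: Optional[date]   # None while the finding is still open
    false_positive: bool     # set during triage


def effectiveness(records: list[Record], all_services: set[str],
                  scanned_services: set[str]) -> dict:
    """Compute tracking metrics from a findings export (hypothetical schema)."""
    real = [r for r in records if not r.false_positive]
    fixed = [r for r in real if r.closed is not None]
    by_source: dict[str, int] = {}
    for r in real:
        by_source[r.source] = by_source.get(r.source, 0) + 1
    covered = len(scanned_services & all_services)
    return {
        # Share of all triaged findings that turned out to be false positives.
        "false_positive_share": round(1 - len(real) / len(records), 3) if records else 0.0,
        "median_days_to_fix": median([(r.closed - r.opened).days for r in fixed]) if fixed else None,
        # Share of known services scanned at least once in the period.
        "service_coverage": round(covered / len(all_services), 3) if all_services else 0.0,
        "real_findings_by_source": by_source,
        # How often real findings led to an actual fix.
        "fix_rate": round(len(fixed) / len(real), 3) if real else 0.0,
    }
```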

How do cloud provider native tools fit with third-party SAST/DAST/IAST?

Cloud providers' native tools often excel at infrastructure and configuration checks and can complement specialized SAST/DAST/IAST products. Many organizations combine native posture management with third‑party application security testing to balance depth and integration.

How frequently should I review and retune my testing setup?

Reassess your mix of SAST, DAST and IAST at least whenever you introduce major architectural changes, such as adopting serverless or expanding into new cloud regions. Also review rules and thresholds whenever developers complain about noise or missed issues.